Project Category: Software

About our project

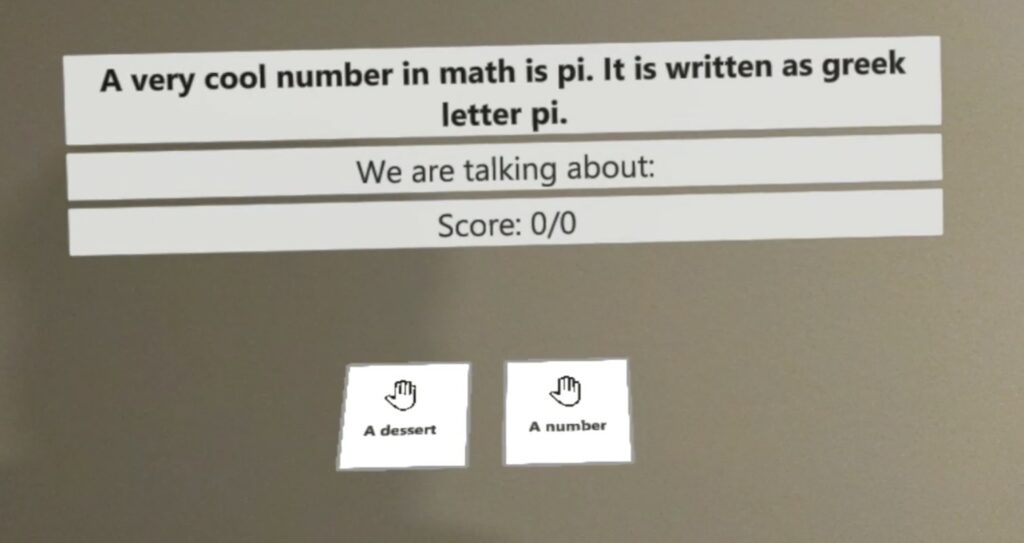

About 30% of autistic students are unable to communicate reliably through speech. This is because they have yet to develop the fine motor skills needed to communicate verbally. These students have been shown to benefit from other modes of communication. One current solution is to have the student point at a letter board held up by a teaching assistant. The teaching assistant is responsible for holding the board at the correct distance and orientation so the student can spell out their answer, and for interpreting the response.

Unfortunately, not all students in our target population have access to a dedicated communication assistant at school. This is the issue our solution aims to address. Our project focuses on improving learning outcomes for minimally verbal autistic students. These students understand concepts but struggle to convey their knowledge, which creates a gap between a student’s actual ability and their perceived ability. As a result, they are typically presented with learning content far beneath their true abilities, which leaves them frustrated and stifles their learning progress.

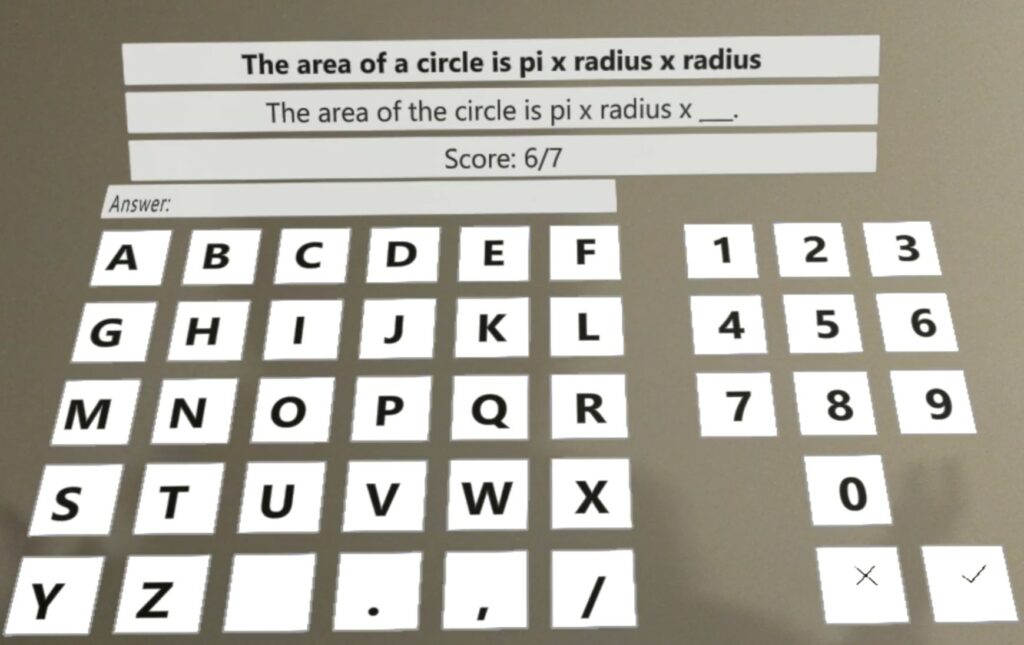

To help close this gap, we have developed a teaching solution for the HoloLens 2 mixed reality device. Our goal is to help these students learn, show their potential, and develop fine motor skills that will help them communicate through a keyboard-type device. We have developed a holographic input device that adapts to the student’s range of motion by automatically changing the keyboard’s position and angle. As the user’s pointing skills improve, the system provides a more complex input device with more keys, until they are eventually using a full keyboard.

Meet our team members

Wafa Anam

I am in my final year of software engineering at the University of Calgary. I have gained proficiency in object-oriented programming in C++ and Java through coursework. I also recently completed a year-long internship where I was exposed to natural language processing basics using Python and to front-end web development using React and TypeScript. I am passionate about absorbing as many new technical skills as possible while I explore problems that I can empathize with.

Erslan Salman

I am a fourth-year software engineering student at the University of Calgary. I am skilled in many areas of software development, such as software planning, database management, front end, back end, and object-oriented programming. Some specific technologies that I am familiar with are Java, Python, C, C++, C#, Angular, JavaScript, and Node.js. I am interested in new and emerging technologies, such as machine learning, artificial intelligence, and virtual and mixed reality. I always enjoy being able to work with technologies at the forefront of innovation.

Sean Mai

I am in my final year of software engineering and have gained experience in both academic and professional environments. I have established strong software fundamentals, such as object-oriented programming and software planning, through coursework and online material. I enjoy learning all facets of software development and have worked in full-stack development through various internships. This includes front-end, back-end, and DevOps experience with an emphasis on scalability, as well as exposure to software project management. I’m passionate about solving problems and finding innovative ways to do that with software. The opportunity to work with new and relevant technologies is also something that excites me about this project.

Andy Tran

I am currently in my fourth year of software engineering at the University of Calgary. My passion in the software engineering field is game development. I found software engineering to be a satisfying field in which I could pursue my passion for technology while continually adapting to how technology changes over time. Although my passion lies in game development, I hope to learn all I can about the different types of software development. Software design continues to change as we learn more, and I hope to evolve along with it. The languages I primarily use are C++, Java, and JavaScript, and I work in Visual Studio Code.

Kyle Friedt

I am in my fourth year of software engineering at the University of Calgary. I have previously completed a degree in Biochemistry at the UofA. After my first degree, I worked in sales and marketing for various technology and pharmaceutical companies. I have an interest in concurrent programming and robotics. I have experience in database management, full stack development, and data science. I am familiar with C, C++, Python, Java, TypeScript, and Rust. I have also taken a few courses on machine learning, concurrent programming, and data science independently of my university courses.

Use Cases

Use Case 1

- User Profile: This user has adequate pointing skills and has used the device quite a few times, but cannot sit still.

- Scenario: The user is comfortable pointing, but they move around a lot.

- Solution: The interface follows the user around.
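The follow behaviour can be illustrated with a short Python sketch. It is not the project's HoloLens code: the function name, the coordinate convention (yaw 0 faces +z), and the 0.5 m default distance are all assumptions made for illustration.

```python
import math


def follow_target(head_pos, head_yaw_deg, distance_m=0.5):
    """Return the point distance_m metres in front of the head.

    head_pos is (x, y, z) in metres. Assumed convention: yaw 0 faces +z
    and yaw increases as the user turns toward +x. Height (y) is kept.
    """
    yaw = math.radians(head_yaw_deg)
    x, y, z = head_pos
    return (x + distance_m * math.sin(yaw), y, z + distance_m * math.cos(yaw))
```

Recomputing this target whenever the headset moves or turns would keep the keyboard in front of the user at a constant distance.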

Use Case 2

- User Profile: This user is new to the system and has poor pointing skills and a poor range of motion. They struggle to reach some of the buttons.

- Scenario: Simulate someone trying to reach the button but not quite being able to.

- Solution:

  - Give them a defined amount of time to try to reach the button

  - Record the distance to the button, for example the closest X value and Y value reached

  - Move the keyboard according to these closest values
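The steps above could be sketched as follows. This is an illustrative Python sketch under stated assumptions, not the project's implementation: fingertip readings are assumed to arrive as `(t, x, y)` tuples in the keyboard's plane, and the function names and the time limit parameter are hypothetical.

```python
def closest_offsets(samples, button_xy, time_limit_s):
    """Return the smallest signed X and Y gaps between fingertip and button.

    samples is a list of (t, x, y) fingertip readings; only readings with
    t <= time_limit_s are used. Returns None if no reading qualifies.
    """
    bx, by = button_xy
    window = [(x, y) for t, x, y in samples if t <= time_limit_s]
    if not window:
        return None
    # The closest X and the closest Y are tracked independently, so they
    # may come from different readings.
    dx = min((bx - x for x, _ in window), key=abs)
    dy = min((by - y for _, y in window), key=abs)
    return dx, dy


def moved_keyboard(keyboard_xy, offsets):
    """Shift the keyboard so the target button lands at the closest reach."""
    if offsets is None:
        return keyboard_xy  # nothing recorded, leave the keyboard in place
    kx, ky = keyboard_xy
    dx, dy = offsets
    return (kx - dx, ky - dy)
```

Keeping the offsets signed means the keyboard moves toward the hand regardless of which side of the button the user fell short on.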

Use Case 3

- User Profile: This user is new to the system and has some range of motion issues. They can point, but the button needs to be at a specific angle.

- Scenario: Simulate someone who is not comfortable with a flat keyboard.

- Solution:

  - Record hand angle when pointing

  - Adjust the angle of the keyboard to match the hand angle
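One way to turn the recorded hand angles into a keyboard angle is sketched below in Python. Averaging the readings and clamping the result are assumptions added for illustration, as are the function name and the 0 to 90 degree limits; the source only states that the keyboard angle is adjusted to match the hand angle.

```python
def keyboard_tilt(hand_angles_deg, min_deg=0.0, max_deg=90.0):
    """Return a keyboard tilt matching the average recorded hand angle.

    hand_angles_deg holds the hand pitch (degrees) at each pointing
    attempt. The result is clamped to [min_deg, max_deg]; None is
    returned when nothing has been recorded yet.
    """
    if not hand_angles_deg:
        return None
    mean = sum(hand_angles_deg) / len(hand_angles_deg)
    return max(min_deg, min(max_deg, mean))
```

Averaging several attempts smooths out a single awkward reach, and the clamp keeps the keyboard from tilting to an unusable angle.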

Use Case 4

- User Profile: This user has trouble pointing at an object they are looking at.

- Scenario: Simulate someone selecting a button while looking at another one.

- Solution:

  - Log the last button that they looked at when a button is clicked

  - This requires a script that retrieves the last object the user looked at when a button is clicked
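The logging described above could look like the following Python sketch. It is a hypothetical illustration rather than the project's script: the class name, the `on_gaze` and `on_click` callbacks, and the record fields are all assumed.

```python
class GazeClickLogger:
    """Remember the last button looked at and log it with each click."""

    def __init__(self):
        self.last_gazed = None  # id of the most recently gazed button
        self.records = []       # one entry per click

    def on_gaze(self, button_id):
        # Called whenever eye tracking reports the gaze entering a button.
        self.last_gazed = button_id

    def on_click(self, button_id):
        # Store what was clicked next to what was last looked at.
        record = {
            "clicked": button_id,
            "gazed": self.last_gazed,
            "match": button_id == self.last_gazed,
        }
        self.records.append(record)
        return record
```

Comparing `clicked` with `gazed` in each record is one way to separate the input the user likely intended from the input they actually made.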

Details about our design

HOW OUR DESIGN ADDRESSES PRACTICAL ISSUES

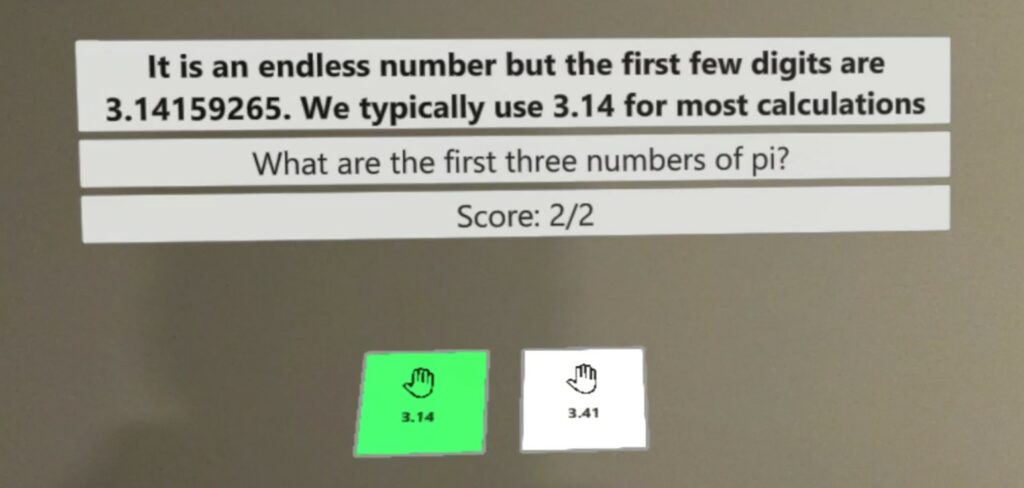

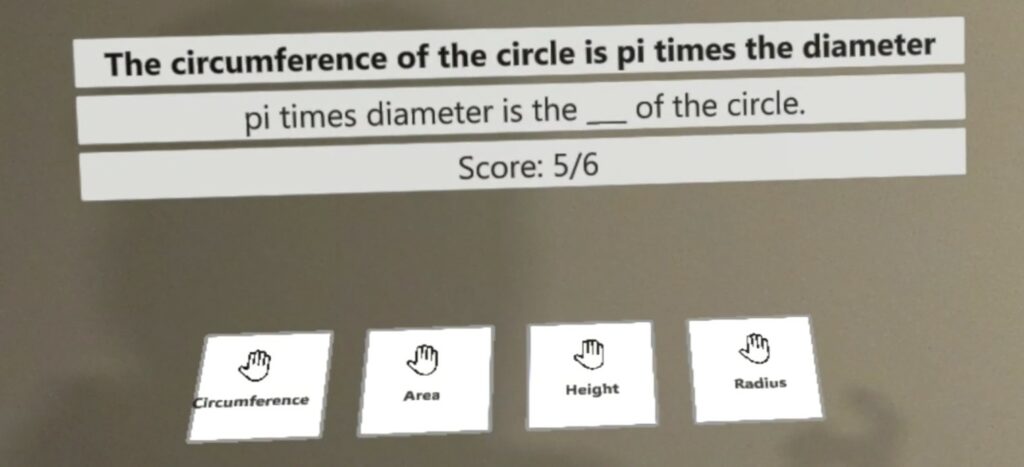

Minimally verbal autistic students struggle with verbal communication. To assist them in the classroom environment, we have developed a virtual input system so they can submit answers and demonstrate their understanding comfortably.

Another consideration was the motor ability of the students. To adapt to this, we developed a system to adjust the placement of the input device to compensate for the user’s hand placement so they can select inputs comfortably with less fatigue.

These students move around and turn often. To accommodate this, the input device and display follow the user’s headset as they turn or move.

WHAT MAKES OUR DESIGN INNOVATIVE

Our design uses mixed reality on the Microsoft HoloLens 2. This allowed us to create a virtual solution that is merged with the user’s environment. Both hand and eye position data can be tracked and gathered from the user through the HoloLens.

The solution also requires little to no human assistance. The virtual environment guides the user and adapts to the user’s needs to allow them to operate autonomously.

WHAT MAKES OUR DESIGN SOLUTION EFFECTIVE

Ease of use is one of the main factors in the design of our solution. The system requires minimal setup and boasts a simple, concise interface that is easy for a new user to navigate.

Our solution was centred around allowing the user to operate autonomously, without the need for human assistance. We accomplished this by creating an adaptive, immersive system. The inputs the user interacts with will follow the user and adapt in complexity, position and orientation according to their needs.

We considered many use cases for our user group and created solutions to accommodate them, covering a wide range of user mobility and attentiveness.

The project also focused heavily on gathering meaningful data for future improvements. Through our tracked data, we are able to distinguish intended input from actual input and to follow the user’s progression through the system.

HOW WE VALIDATED OUR DESIGN SOLUTION

Due to the ongoing pandemic, we were not able to test with the intended end-user group. Instead, we tested with family members of the group and collected surveys to get an idea of the system’s usability. Although the answers may be biased, the data gives an indication of user satisfaction with the system.

FEASIBILITY OF OUR DESIGN SOLUTION

This project is the first of its kind, as it explores alternatives to the solutions currently in place. At the moment, minimally verbal autistic students succeed best with a human assistant in class. Although this approach is effective, it is costly to hire an assistant for the whole of a student’s education. Our solution aims to be a cheaper alternative, requiring only the cost of the system itself.

Partners and mentors

We want to thank our academic advisor, Dr. Krishnamurthy, for his guidance and support throughout the project, and for providing us with two HoloLens devices to develop our solution.